Relying for your perspective, the final two years have both long past by means of very slowly, or in no time. Whilst the COVID pandemic by no means perceived to finish – and technically nonetheless hasn’t – the final two years have whizzed by means of for the tech business, and particularly for NVIIDA. The corporate introduced its Ampere GPU structure simply two years in the past at GTC 2020, and after promoting extra in their chips than ever sooner than, now in 2022 it’s already time to introduce the following structure. So with out additional ado, let’s communicate concerning the Hopper structure, which is able to underpin the following technology of NVIDIA server GPUs.

As has grow to be a ritual now for NVIDIA, the corporate is the usage of its Spring GTC match to release its subsequent technology GPU structure. Presented simply two years in the past, Ampere has been NVIDIA’s maximum a hit server GPU structure thus far, with over $10B in knowledge heart gross sales in simply the final yr. And but NVIDIA has little time to leisure on their laurels, because the the expansion and profitability of the server accelerator marketplace implies that there are extra competition than ever sooner than aiming take a work of NVIDIA’s marketplace for themselves. To that finish, NVIDIA is in a position (and keen) to make use of their greatest display of the yr to discuss their subsequent technology structure, in addition to the primary merchandise that may put into effect it.

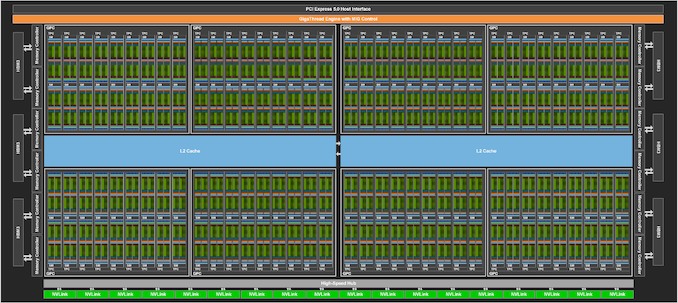

Taking NVIDIA into the following technology of server GPUs is the Hopper structure. Named after pc science pioneer Grace Hopper, the Hopper structure is an overly vital, but additionally very NVIDIA replace to the corporate’s ongoing circle of relatives of GPU architectures. With the corporate’s efforts now solidly bifurcated into server and client GPU configurations, Hopper is NVIDIA doubling down on the whole lot the corporate does properly, after which construction it even larger than ever sooner than.

Hyperbole apart, during the last a number of years NVIDIA has evolved an overly cast playbook for how you can take on the server GPU business. At the {hardware} aspect of issues that necessarily boils right down to appropriately figuring out present and long term developments in addition to buyer wishes in excessive functionality accelerators, making an investment within the {hardware} had to care for the ones workloads at nice speeds, after which optimizing the heck out of it all. And for NVIDIA, the final step could possibly be an important bit: NVIDIA places a large number of paintings into getting out of doing paintings.

That mentality, in flip, is entrance and heart for NVIDIA’s Hopper structure. Whilst NVIDIA has made investments around the board to toughen functionality, from reminiscence bandwidth and I/O to system finding out and confidential computing, the most important functionality uplifts with Hopper are within the spaces the place NVIDIA has found out how you can do much less paintings, making their processors all of the quicker.

Kicking issues off for the Hopper technology is H100, NVIDIA’s flagship server accelerator. In accordance with the GH100 GPU, GH100 is a conventional NVIDIA server-first release, with the corporate beginning on the excessive finish to broaden accelerator playing cards for his or her biggest and private pocketed server and undertaking consumers.

| NVIDIA Accelerator Specification Comparability | |||||

| H100 | A100 (80GB) | V100 | |||

| FP32 CUDA Cores | 16896 | 6912 | 5120 | ||

| Tensor Cores | 528 | 432 | 640 | ||

| Spice up Clock | ~1.78GHz (Now not Finalized) |

1.41GHz | 1.53GHz | ||

| Reminiscence Clock | 4.8Gbps HBM3 | 3.2Gbps HBM2e | 1.75Gbps HBM2 | ||

| Reminiscence Bus Width | 5120-bit | 5120-bit | 4096-bit | ||

| Reminiscence Bandwidth | 3TB/sec | 2TB/sec | 900GB/sec | ||

| VRAM | 80GB | 80GB | 16GB/32GB | ||

| FP32 Vector | 60 TFLOPS | 19.5 TFLOPS | 15.7 TFLOPS | ||

| FP64 Vector | 30 TFLOPS | 9.7 TFLOPS (1/2 FP32 fee) |

7.8 TFLOPS (1/2 FP32 fee) |

||

| INT8 Tensor | 2000 TOPS | 624 TOPS | N/A | ||

| FP16 Tensor | 1000 TFLOPS | 312 TFLOPS | 125 TFLOPS | ||

| TF32 Tensor | 500 TFLOPS | 156 TFLOPS | N/A | ||

| FP64 Tensor | 60 TFLOPS | 19.5 TFLOPS | N/A | ||

| Interconnect | NVLink 4 18 Hyperlinks (900GB/sec) |

NVLink 3 12 Hyperlinks (600GB/sec) |

NVLink 2 6 Hyperlinks (300GB/sec) |

||

| GPU | GH100 (814mm2) |

GA100 (826mm2) |

GV100 (815mm2) |

||

| Transistor Depend | 80B | 54.2B | 21.1B | ||

| TDP | 700W | 400W | 300W/350W | ||

| Production Procedure | TSMC 4N | TSMC 7N | TSMC 12nm FFN | ||

| Interface | SXM5 | SXM4 | SXM2/SXM3 | ||

| Structure | Hopper | Ampere | Volta | ||

Forward of nowadays’s keynote presentation – which as this newsletter is going up, remains to be occurring – NVIDIA presented a press pre-briefing on Hopper. In conventional NVIDIA model, the corporate has been very selective about the main points launched up to now (least it will get leaked forward of Jensen Huang’s keynote). So we will’t make a totally apples-to-apples comparability to A100 rather but, as we don’t have the entire specs. However according to this pre-briefing, we will no doubt tease out some attention-grabbing highlights about NVIDIA’s structure.

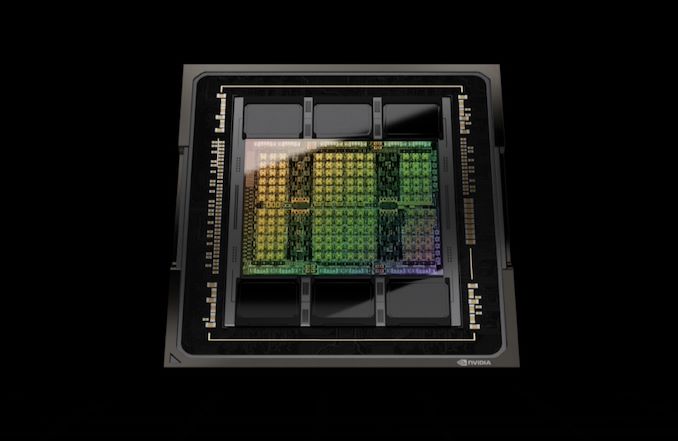

Before everything, NVIDIA is as soon as once more construction massive for his or her flagship GPU. The GH100 GPU is made out of 80 billion transistors and is being constructed on what NVIDIA is looking a “customized” model of TSMC’s 4N procedure node, an up to date model of TSMC’s N5 generation that gives higher energy/functionality traits and an overly modest growth in density. So even at simply two years after Ampere, NVIDIA is creating a complete node soar after which some for GH100. At this level NVIDIA isn’t disclosing die sizes, so we don’t have actual figures to proportion. However given the identified density enhancements of TSMC’s procedure nodes, GH100 must be shut in measurement to the 826mm2 GA100. And certainly, it’s, at 814mm2.

Like NVIDIA’s earlier sever accelerators, the H100 card isn’t transport with a fully-enabled GPU. So the figures NVIDIA is offering are according to H100 as carried out, with on the other hand many purposeful devices (and reminiscence stacks) are enabled.

With regard to functionality, NVIDIA isn’t quoting any figures for same old vector functionality upfront. They’re on the other hand quoting tensor functionality, which relying at the layout is both 3x or 6x quicker than the A100 accelerator. We’ll see how this breaks down between clockspeed will increase and both bigger or further tensor cores, however obviously NVIDIA is as soon as once more throwing much more {hardware} at tensor functionality, a method that has labored out properly for them up to now.

Formally, NVIDIA loves to quote figures with sparsity enabled, however for the needs of our spec sheet I’m the usage of the non-sparse numbers for a extra apples-to-apples comparability with earlier NVIDIA {hardware}, in addition to competing {hardware}. With sparsity enabled, TF32 functionality and on down will also be doubled.

Reminiscence bandwidth may be making improvements to considerably over the former technology, with H100 providing 3TB/2d of bandwidth there. The rise in bandwidth this time round comes because of the usage of HBM3, with NVIDIA changing into the primary accelerator seller to make use of the latest-generation model of the excessive bandwidth reminiscence. H100 will include 6 16GB stacks of the reminiscence, with 1 stack disabled. The online result’s 80GB of HBM3 working at an information fee of four.8Gbps/pin, and hooked up to a 5120-bit reminiscence bus.

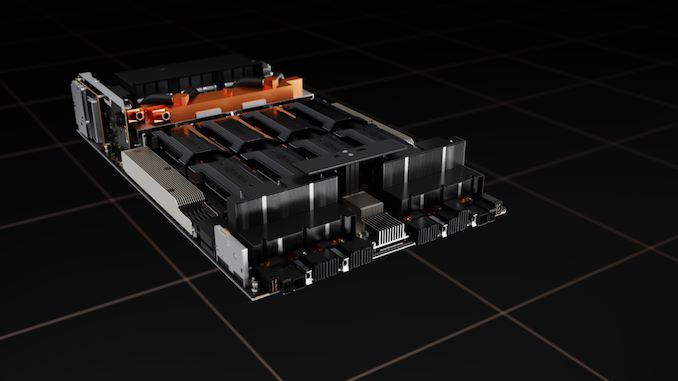

NVIDIA shall be providing H100 of their same old two shape components: SXM mezzanine for prime functionality servers, and a PCIe card for extra mainstream servers. The ability necessities for each shape components have long past up considerably over the former technology. NVIDIA is quoting an eye-popping 700 Watt TDP for the SXM model of the cardboard, 75% upper than the reliable 400W TDP of the A100. For higher or worse, NVIDIA is preserving not anything again right here, regardless that the continued decline in transistor energy scaling isn’t doing NVIDIA any favors, both.

Cooling one of these scorching GPU shall be an enchanting activity, regardless that now not past present generation. At those energy ranges we’re virtually no doubt having a look at liquid cooling, one thing the SXM shape issue is well-suited for. Nonetheless, it’s value noting that the rival OAM shape issue – necessarily the Open Compute Mission’s tackle SXM to be used in accelerators – is designed to best out at 700W. So NVIDIA is outwardly coming near the higher limits of what even a mezzanine taste card can care for, assuming that sever distributors don’t hotel to unique cooling strategies.

In the meantime the H100 PCie card will see its TDP raised to 350W, from 300W nowadays. For the reason that 300W is the normal restrict for PCIe playing cards, it’ll be attention-grabbing to peer how NVIDIA and their companions stay the ones playing cards cool. In a different way, with simply part the TDP of the SXM card, we’re anticipating the PCIe model to be clocked/configured noticeably slower with the intention to mood the cardboard’s energy intake.

Contents

- 1 Hopper Tensor Cores: Now With Transformer Engines

- 2 DPX Directions: Dynamic Programming for GPUs

- 3 Confidential Computing: Protective GPU Knowledge From Prying Eyes

- 4 Multi-Example GPU v2: Now With Isolation

- 5 NVLink 4: Extending Chip I/O Bandwidth to 900GB/sec

- 6 NVIDIA HGX Rides Once more: HGX For H100

- 7 Hopper H100 Accelerators: Transport In Q3 2022

Hopper Tensor Cores: Now With Transformer Engines

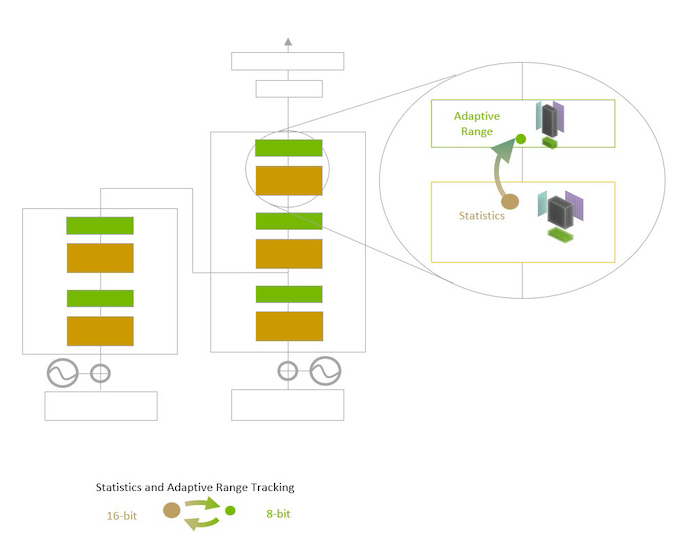

Shifting directly to the big-ticket architectural options of the Hopper structure, we’re get started with NVIDIA’s Transformer Engines. Residing as much as their identify, the transformer engines are a brand new, extremely specialised form of tensor core, which can be designed to additional boost up transformer ML fashions.

In line with NVIDIA’s center of attention on system finding out, for the Hopper structure the corporate has taken a recent take a look at the make-up of the ML marketplace, and what workloads are well-liked and/or probably the most difficult on present {hardware}. The winner, on this regard, has been transformers, a kind of deep finding out style that experience risen in recognition somewhat temporarily because of their application in herbal language processing and pc imaginative and prescient. Contemporary developments in transformer generation, such because the GPT-3 style, in conjunction with call for from provider operators for higher herbal language processing, have made transformers the most recent massive step forward in ML.

However on the identical time, the processing necessities for transformers also are hampering the improvement of even higher fashions. In brief, higher fashions require an ever-larger choice of parameters, and at over 175 billion parameters for GPT-3 on my own, coaching occasions for transformers are changing into unwieldy, even on huge GPU clusters.

To that finish, NVIDIA has evolved a variant of the tensor core in particular for rushing up transformer coaching and inference, which they have got dubbed the Transformer Engine. NVIDIA has optimized this new unit by means of stripping it down to only processing the decrease precision knowledge codecs utilized by maximum transformers (FP16), after which scaling issues down much more with the creation of an FP8 layout as properly. The purpose with the brand new devices, briefly, is to make use of the minimal precision important at each step to coach transformers with out shedding accuracy. In different phrases, to steer clear of doing extra paintings than is important.

With that stated, in contrast to extra conventional neural community fashions which might be skilled at a hard and fast precision all through, NVIDIA’s recent hack for transformers is to alter the precision, since FP8 can’t be used all through a style. Because of this, Hopper’s transformer engines can change between FP16 and FP8 coaching on a layer by means of layer foundation, using NVIDIA-provided heuristics that paintings to make a choice the bottom precision wanted. The online get advantages is that each layer that may be processed at FP8 will also be processed two times as speedy, because the transformer engines can pack and procedure FP8 knowledge two times as temporarily as FP16.

Blended with the extra reminiscence on H100 and the quicker NVLink 4 I/O, and NVIDIA claims that a huge cluster of GPUs can teach a transformer as much as 9x quicker, which might carry down coaching occasions on nowadays’s biggest fashions right down to a extra affordable time period, and make even bigger fashions simpler to take on.

In the meantime, at the inference aspect of issues, Hopper too can straight away eat its personal FP8 skilled fashions for inference use. That is a very powerful difference for Hopper, because it permits consumers to differently skip changing and optimizing a skilled transformer style right down to INT8. NVIDIA isn’t claiming any particular functionality advantages from sticking with FP8 over INT8, however it method builders can revel in the similar functionality and reminiscence utilization advantages of working inference on an INT8 style with out the previously-required conversion step.

In any case, NVIDIA is claiming anyplace between a 16x and 30x building up in transformer inference functionality on H100 as opposed to A100. Like their coaching claims, that is an H100 cluster as opposed to an A100 cluster, so reminiscence and I/O enhancements also are enjoying an element right here, however it none the fewer underscores that H100’s transformer engines aren’t only for rushing up coaching.

DPX Directions: Dynamic Programming for GPUs

NVIDIA’s different massive smart-and-lazy growth for the Hopper structure comes courtesy of the sphere of dynamic programming. For his or her recent technology of generation, NVIDIA is including beef up for the programming style by means of including a brand new set of directions only for dynamic programming. The corporate is looking those DPX Directions.

Dynamic programming, in a nutshell, is some way of breaking down advanced issues into smaller, more practical issues in a recursive method, after which fixing the ones smaller issues first. The important thing function of dynamic programming is if a few of these sub-problems are equivalent, then the ones redundancies will also be known and eradicated – which means a sub-problem will also be solved as soon as, and its effects stored for long term use throughout the bigger challenge.

All of which is to mention that, like Sparsity and Transformer Engines, NVIDIA is imposing dynamic programming to permit their GPUs to get out of doing extra paintings. Through getting rid of the redundant portions of workloads that may be damaged up consistent with the foundations of dynamic programming, it’s that a lot much less paintings NVIDIA’s GPUs wish to do, and that a lot quicker they are able to produce effects.

Although in contrast to Transformer Engines, including dynamic programming beef up by the use of the DPX Directions isn’t such a lot about rushing up present workloads on GPUs as it’s enabling new workloads on GPUs. Hopper is the primary NVIDIA structure to beef up dynamic programming, so workloads that may be resolved with dynamic programming are usually run on CPUs and FPGAs. In that admire, that is NVIDIA discovering yet another workload they are able to scouse borrow from CPUs and run on a GPU as an alternative.

General, NVIDIA is claiming a 7x growth in dynamic programming set of rules functionality on a unmarried H100 as opposed to naïve execution on an A100.

As for the real-world implications of DPX Directions, NVIDIA is bringing up direction making plans, knowledge science, robotics, and biology as all being possible beneficiaries of the brand new generation. Those fields already use a number of well known dynamic programming algorithms, comparable to Smith-Waterman and Flyod-Warshall, which ranking genetic collection aligning and to find the shortest distances between pairs of locations respectively.

General, dynamic programming is without doubt one of the extra area of interest fields amongst excessive functionality workloads. Nevertheless it’s person who NVIDIA believes could be a excellent are compatible for GPUs as soon as the suitable {hardware} beef up is to be had.

Confidential Computing: Protective GPU Knowledge From Prying Eyes

Moving clear of performance-focused options, NVIDIA’s different massive push with the Hopper structure is at the safety entrance. With the growth of GPU utilization in cloud computing environments – and particularly shared VM environments – the corporate is taking a brand new center of attention at the safety issues that includes, and how you can safe shared methods.

The outcome of the ones efforts is that Hopper is introducing {hardware} beef up for depended on execution environments. Particularly, Hopper helps the introduction of what NVIDIA is terming a confidential digital system, the place the entire knowledge throughout the VM surroundings is safe, and the entire knowledge getting into (and leaving) the surroundings is encrypted.

NVIDIA didn’t pass over too most of the technical main points underpinning their new safety features in our-pre-briefing, however in keeping with the corporate it’s a made of a mixture of new {hardware} and instrument options. Of specific observe, knowledge encryption/decryption when transferring to and from the GPU is speedy sufficient to be accomplished on the PCIe line fee (64GB/sec), which means there’s no slowdown when it comes to sensible host-to-GPU bandwidth when the usage of this safety function.

This depended on execution surroundings, in flip, is designed to withstand all types of tampering. The reminiscence contents throughout the GPU itself are secured by means of what NVIDIA is terming a “{hardware} firewall”, which prevents out of doors processes from touching them, and this identical coverage is prolonged to knowledge in-flight within the SMs as properly. The depended on surroundings may be stated to be secured in opposition to the OS or the hypervisor gaining access to the contents of the GPU from above, limiting get right of entry to to only the landlord of the VM. Which is to mention that, even with bodily get right of entry to to the GPU, it shouldn’t be conceivable to get right of entry to the information inside of a safe VM on hopper.

In the long run, NVIDIA’s goal right here seems to be making/holding their consumers comfy the usage of GPUs to procedure delicate knowledge by means of making them a lot {hardware} to wreck into once they’re operating in a secured mode. This, in flip, is not just to give protection to historically delicate knowledge, comparable to scientific knowledge, but additionally to give protection to the type of high-value AI fashions that a few of NVIDIA’s consumers are actually growing. Given the entire paintings that may pass into growing and coaching a style, consumers don’t need their fashions getting copied, be it in a shared cloud surroundings or being pulled out of a bodily insecure edge tool.

Multi-Example GPU v2: Now With Isolation

As an extension of NVIDIA’s safety efforts with confidential computing, the corporate has additionally prolonged those protections to their Multi-Example GPU (MIG) surroundings. MIG cases can now be solely remoted, with I/O between the example and the host solely virtualized and secured as properly, necessarily granting MIG cases the similar safety features as H100 general. General, this strikes MIG nearer to CPU virtualization environments, the place the quite a lot of VMs think to not consider every different and are stored remoted.

NVLink 4: Extending Chip I/O Bandwidth to 900GB/sec

With the Hopper structure additionally comes a brand new rendition of NVIDIA’s NVLink high-bandwidth interconnect for wiring up GPUs (and shortly, CPUs) in combination for higher functionality in workloads that may scale out over a couple of GPUs. NVIDIA has iterated on NVLink with each technology in their flagship GPU, and this time isn’t any other, with the creation of NVLink 4.

Whilst we’re waiting for a complete disclosure of technical specs from NVIDIA, the corporate has showed that NVLink bandwidth on a per-chip foundation has been higher from 600GB/2d on A100 to 900GB/2d for H100. Observe that that is the sum overall of all upstream and downstream bandwidth throughout the entire particular person hyperlinks that NVLink helps, so lower those figures in part to get particular transmit/obtain charges.

| NVLink Specification Comparability | |||||

| NVLink 4 | NVLink 3 | NVLink 2 | |||

| Signaling Charge | 100 Gbps | 50 Gbps | 25 Gbps | ||

| Lanes/Hyperlink | 2 | 4 | 8 | ||

| Bandwidth/Route/Hyperlink | 25 GB/sec | 25 GB/sec | 25 GB/sec | ||

| Overall Bandwidth/Hyperlink | 50 GB/sec | 50 GB/sec | 50 GB/sec | ||

| Hyperlinks/Chip | 18 (GH100) |

12 (GA100) |

6 (GV100) |

||

| Bandwidth/Chip | 900 GB/sec | 600 GB/sec | 300 GB/sec | ||

900GB/sec represents a 50% building up in I/O bandwidth for H100. Which isn’t as nice an building up as H100’s overall processing throughput, however a practical growth given the ever-escalating complexities in imposing quicker networking charges.

For the reason that NVLink 3 used to be already working at a 50 Gbit/sec signaling fee, it’s now not transparent if the extra bandwidth is courtesy of a fair quicker signaling fee, or if NVIDIA has as soon as once more adjusted the choice of hyperlinks coming from the GPU. NVIDIA formerly altered the NVLink lane configuration for A100, once they halved the lane width and doubled the choice of lanes, all whilst doubling the signaling fee. Including lanes on best of that suggests now not having to determine how you can toughen the signaling fee by means of much more, however it additionally method a 50% building up within the choice of pins wanted for NVLink I/O.

Alongside the ones traces, it’s additionally value noting that NVIDIA is including PCIe 5.0 beef up with Hopper. As PCIe remains to be used for host-to-GPU communications (till Grace is in a position, a minimum of), this implies NVIDIA has doubled their CPU-GPU bandwidth, permitting them to stay H100 that significantly better fed. Although hanging PCIe 5.0 to excellent use goes to require a number CPU with PCIe 5.0 beef up, which isn’t one thing AMD or Intel are offering rather but. Possibly, any person can have {hardware} in a position and transport by the point NVIDIA ships H100 in Q3, particularly since NVIDIA is keen on homogenization for his or her DGX pre-built servers.

In any case, with the release of H100/NVLink 4, NVIDIA may be the usage of this time to announce a brand new, exterior NVLink transfer. This exterior transfer extends past NVIDIA’s present on-board NVSwitch capability, which is used to lend a hand construct extra advanced GPU topologies inside of a unmarried node, and permits H100 GPUs to at once be in contact with every different throughout a couple of nodes. In essence, it’s a alternative for having NVIDIA GPUs undergo Infiniband networks with the intention to be in contact cross-node.

The exterior NVLInk Transfer permits for as much as 256 GPUs to be attached in combination inside of a unmarried area, which goes out to 32 8-way GPU nodes. Consistent with NVIDIA, a unmarried, 1U NVLink Transfer gives 128 lanes of NVLink by the use of 32 Octal SFP (OSFP) transceivers. The overall Transfer, in flip, gives a complete bisection bandwidth of 70.4TB/2d.

It’s value noting, on the other hand, that the NVLink Transfer isn’t a wholesale alternative for Infiniband – which after all, NVIDIA additionally sells via its networking {hardware} department. Infiniband connections between nodes are nonetheless wanted for different varieties of communications (e.g. CPU to CPU), so exterior NVLink networks are a complement to Infiniband, permitting H100 GPUs to at once chat among themselves.

NVIDIA HGX Rides Once more: HGX For H100

Remaining, however now not least, NVIDIA has showed that they’re updating their HGX baseboard ecosystem for H100 as properly. A staple of NVIDIA’s multi-GPU designs since they first started the usage of the SXM shape issue for GPUs, HGX baseboards are NVIDIA-produced GPU baseboards for device developers to make use of in designing entire multi-GPU methods. The HGX forums give you the complete connection and mounting surroundings for NVIDIA’s SXM shape issue GPUs, after which sever distributors can direction energy and PCIe knowledge (amongst different issues) from their motherboards to the HGX baseboard. For the present A100 technology, NVIDIA has been promoting 4-way, 8-way, and 16-way designs.

Relative to the GPUs themselves, HGX is somewhat unexciting. Nevertheless it’s a very powerful a part of NVIDIA’s ecosystem. Server companions can pickup an HGX board and GPUs, after which temporarily combine that right into a server design, somewhat than having to design their very own server from scratch. Which with regards to H100, implies that establishment will (in large part) reign, and that NVIDIA’s server companions will be capable to bring together methods in the similar method as sooner than.

Hopper H100 Accelerators: Transport In Q3 2022

Wrapping issues up, NVIDIA is making plans on having H100-equipped methods to be had in Q3 of this yr. This may increasingly come with NVIDIA’s complete suite of self-built methods, together with DGX and DGX SuperPod servers, in addition to servers from OEM companions the usage of HGX baseboards and PCIe playing cards. Although in standard model, NVIDIA isn’t pronouncing particular person H100 pricing, bringing up the truth that they promote this {hardware} via server companions. We’ll have somewhat extra perception as soon as NVIDIA declares the costs of their very own DGX methods, however suffice it to mention, don’t be expecting H100 playing cards to return affordable.

Supply Through https://www.anandtech.com/display/17327/nvidia-hopper-gpu-architecture-and-h100-accelerator-announced